Welcome

Foreword

Our approach to the retina is to learn about its component parts at all levels, from a single molecule to an entire neuron. We also make and test hypotheses about the retina's algorithmic design. To these ends, our group's primary investigators are working collaboratively on 5 projects.

G protein mediated information transmission

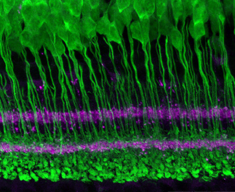

Photoreceptors are at the interface between physical energy and electrical signals. Within the photoreceptor, light is absorbed by a photopigment. The photopigment then activates a G protein, so called because it binds guanosine nucleotides as a source of energy. Activated G protein initiates a cascade of molecules that open ionic channels which let current flow. Bipolar cells have a similar G protein cascade, but our knowledge of its components is incomplete and confused. Our approach is to knock out the gene that encodes a cascade component and then, by observing the effects on signal transmission, determine the function of that component.

Primary Investigator: Noga Vardi

Directionally selective neurons

Ganglion cells are the final output of the retina and come in a variety of sizes and specifications. Directionally selective ganglion cells (DS cells) respond to movement along a line. DS cells send information to parts of the brain involved in scanning the scene and in tracking movement. DS cells receive input from amacrine cells that are sensitive to radial motion, like the circular waves pushed up by a pebble dropped into a pond. We are investigating how the circular sensitivity is converted to a linear one. Our approach is to make computational models that incorporate details about the retina at all levels, from single molecules to complete circuits.

Primary Investigator: Robert G Smith

Adaptation to the statistics of visual scenes

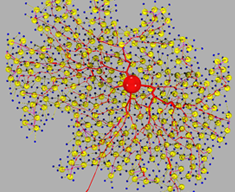

The retina of a person reading a book outside on a sunny day absorbs about 100 million million photons each second (10¹⁴ photons s⁻¹). During this second the retina transmits to the brain about 10 million bits of information (10⁷ bits s⁻¹). Comparison of input to output reveals that a photon is worth only a very small fraction of a bit (10⁻⁷). One reason for the photon's depreciated bit content is that photons are correlated in time and space, which means that the absorption of one photon can be used to infer when and where another photon has been absorbed. Therefore, the retinal circuit eliminates correlations between photons. Because the strength of correlations changes from visual scene to scene, we hypothesize that the retina adapts to these changes by altering its processing algorithm. To better understand these adaptive changes, we record from multiple ganglion cells using a multielectrode array.

Primary Investigator: Vijay Balasubramanian
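The per-photon figure quoted above follows from dividing the output rate by the input rate. A minimal sketch of that arithmetic, using only the two round numbers given in the text (the function and variable names are illustrative, not part of any lab software):

```python
import math


def bits_per_photon(bits_per_second, photons_per_second):
    """Average information carried per absorbed photon, in bits."""
    return bits_per_second / photons_per_second


# Round figures from the text: sunny-day reading conditions.
PHOTONS_PER_SECOND = 1e14  # photons absorbed by the retina each second
BITS_PER_SECOND = 1e7      # bits transmitted to the brain each second

value = bits_per_photon(BITS_PER_SECOND, PHOTONS_PER_SECOND)
exponent = round(math.log10(value))  # order of magnitude of the result
print(f"about 10^{exponent} bits per photon")
```

This is an average over all absorbed photons; it says nothing about how the information is distributed among them.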

Visual processing during eye movements

Natural scenes are rendered like a photographic image across the photoreceptor layer, but as the resulting electrical signals pass through different levels of the visual system, the image is transformed significantly. We hypothesize that these transformations make use of spatiotemporal correlations inherent in natural scenes to predict the future from the present. Additionally, to continue the photographic analogy, the visual system must assemble a monolithic representation of the world from a series of "snapshots" as the eye flits over the scene. Yet almost all investigations of the retina stabilize the eye, and most use artificial images. Therefore, we record from the visual thalamus in awake animals as they view natural scenes. Contemporaneous with these recordings, we record eye movements and use them to reconstruct the succession of images on the retina. By these means we hope to better understand how the visual system transforms the visual image, and how these transformations are influenced by eye movements.

Primary Investigator: Dawei Dong